Objectives of Periodic Review

The primary goal of a periodic review is to verify that a computerised system remains in a validated state and continues to meet its intended purpose within the GxP environment. More specifically, reviews are designed to assess whether changes made since the last review have impacted the validated state or regulatory compliance, and whether any remedial actions—such as partial or complete revalidation—are necessary.

Periodic reviews are also an opportunity to analyze how system changes, deviations, or maintenance activities might affect product quality, data integrity, or patient safety. Each review should be fully documented, and the findings must be critically analyzed. If any risks are identified, corrective and preventive actions (CAPAs) should be initiated and tracked. This proactive approach helps companies prevent recurring issues and keep their systems resilient and compliant.

Tip: Assign a responsible owner (e.g., the system owner or a QA representative) to initiate and close each periodic review. Tracking review schedules in a central register is a best practice.
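A central register can be as simple as one record per system with an owner and a due date. The sketch below is a minimal illustration, assuming a hypothetical `ReviewEntry` record and `register_issues` helper (not part of any specific tool). It flags reviews that are overdue and systems with no assigned owner.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ReviewEntry:
    """One row of a hypothetical periodic review register."""
    system_id: str
    owner: Optional[str]  # system owner or QA representative; None if unassigned
    next_due: date        # scheduled date of the next periodic review


def register_issues(register: list[ReviewEntry], today: date) -> dict[str, list[str]]:
    """Return IDs of systems whose review is overdue or that lack an owner."""
    overdue = [e.system_id for e in register if e.next_due < today]
    unowned = [e.system_id for e in register if not e.owner]
    return {"overdue": overdue, "unowned": unowned}


register = [
    ReviewEntry("LIMS-01", "J. Smith", date(2024, 3, 1)),
    ReviewEntry("ERP-02", None, date(2025, 6, 30)),
]
print(register_issues(register, today=date(2024, 6, 1)))
# {'overdue': ['LIMS-01'], 'unowned': ['ERP-02']}
```

In practice the register would live in a validated tracking system; the point is that both the owner and the due date are mandatory fields that can be checked automatically.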

Scope of the Review

Reviewing Changes

A thorough periodic review begins with an evaluation of all changes made to the system since the last review cycle. This includes modifications to hardware, software, configuration settings, infrastructure components, and external interfaces. Even minor upgrades or patches should be considered, especially if they involve security updates or performance improvements.

Documentation must also be scrutinized. Any change to user requirements, functional specifications, operating procedures, or training materials should be reflected in the system's documentation suite. One often overlooked area is the cumulative impact of several small changes: individually minor, but collectively capable of significantly altering system performance or risk posture.
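Cumulative impact is easier to spot when minor changes are counted per component rather than judged one at a time. The following is a minimal sketch, assuming change records exported as hypothetical `(component, change_date, classification)` tuples and an illustrative threshold of three minor changes; each company would set its own threshold.

```python
from collections import Counter
from datetime import date


def cumulative_change_flags(changes, since: date, threshold: int = 5) -> list[str]:
    """Flag components with at least `threshold` minor changes after `since`.

    `changes` is an iterable of (component, change_date, classification)
    tuples; this export format is a hypothetical assumption.
    """
    counts = Counter(
        component
        for component, changed_on, classification in changes
        if changed_on > since and classification == "minor"
    )
    return sorted(c for c, n in counts.items() if n >= threshold)


changes = [
    ("instrument interface", date(2024, 2, 1), "minor"),
    ("instrument interface", date(2024, 4, 9), "minor"),
    ("instrument interface", date(2024, 7, 15), "minor"),
    ("report templates", date(2024, 5, 2), "minor"),
    ("database", date(2024, 3, 3), "major"),
]
print(cumulative_change_flags(changes, since=date(2024, 1, 1), threshold=3))
# ['instrument interface']
```

A flagged component does not imply a problem; it marks where the reviewer should assess the changes together rather than individually.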

It is also important to consider that external factors such as regulatory updates or changes to applicable guidance documents may impact the system's validated state. Regulations evolve over time, and staying aligned with current expectations is a critical component of compliance.

Tip: Use change logs and version control histories to identify changes and cross-check them against documentation updates. Employ configuration auditing tools where possible to detect unauthorized or undocumented changes.
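Configuration auditing boils down to comparing a current snapshot with an approved baseline and subtracting the differences that an approved change record explains. The sketch below illustrates that idea under stated assumptions: configuration is exported as a flat dictionary, and `approved_keys` (a hypothetical input) lists the items covered by approved change controls.

```python
import hashlib
import json


def fingerprint(settings: dict) -> dict[str, str]:
    """Hash each configuration item so snapshots can be compared item by item."""
    return {
        key: hashlib.sha256(json.dumps(value, sort_keys=True).encode()).hexdigest()
        for key, value in settings.items()
    }


def undocumented_changes(baseline: dict, current: dict, approved_keys: set[str]) -> list[str]:
    """Items added, removed, or modified since the baseline without an approved change."""
    old, new = fingerprint(baseline), fingerprint(current)
    changed = {k for k in old.keys() | new.keys() if old.get(k) != new.get(k)}
    return sorted(changed - approved_keys)


baseline = {"audit_trail": True, "session_timeout_min": 15, "password_min_length": 12}
current = {"audit_trail": False, "session_timeout_min": 30, "password_min_length": 12}
print(undocumented_changes(baseline, current, approved_keys={"session_timeout_min"}))
# ['audit_trail']
```

Storing only the hashes of the baseline keeps sensitive values out of the review record while still revealing that something changed.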

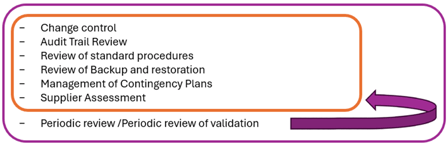

Follow-Up on Supporting Processes

In addition to change reviews, periodic assessments should cover all supporting processes linked to the system. This includes confirming that actions from previous periodic reviews, audits, or CAPAs have been effectively addressed. Audit trail and access reviews are crucial, as is re-evaluating user permissions to detect segregation-of-duties risks or orphaned accounts.
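Orphaned accounts and segregation-of-duties conflicts can both be found by cross-referencing the system's user list with other data. This is a minimal sketch, assuming the user list is available as a mapping of user ID to roles, an HR list of active staff, and a hypothetical list of role pairs that one person must not hold together.

```python
def access_review(accounts: dict[str, set[str]], active_staff: set[str],
                  conflicting_roles: list[tuple[str, str]]):
    """Return (orphaned accounts, segregation-of-duties conflicts).

    accounts: user ID -> roles held in the system
    active_staff: IDs of people currently employed (e.g. from HR)
    conflicting_roles: role pairs that must not be combined (hypothetical policy)
    """
    orphaned = sorted(u for u in accounts if u not in active_staff)
    sod_conflicts = sorted(
        (user, a, b)
        for user, roles in accounts.items()
        for a, b in conflicting_roles
        if a in roles and b in roles
    )
    return orphaned, sod_conflicts


accounts = {
    "asmith": {"analyst", "reviewer"},
    "bjones": {"analyst"},
    "cdoe": {"admin"},  # user has left the company
}
orphaned, sod = access_review(accounts, {"asmith", "bjones"},
                              [("analyst", "reviewer"), ("admin", "analyst")])
print(orphaned)  # ['cdoe']
print(sod)       # [('asmith', 'analyst', 'reviewer')]
```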

Security incidents and emerging threats must be considered, particularly for internet-connected or cloud-hosted systems. Maintenance activities, service agreements, and vendor contracts should also be examined for continued adequacy. Backup and restore procedures, disaster recovery plans, and archival routines should be tested and verified to ensure data availability and protection.
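One way to verify a restore test is to compare checksums of the original data with the restored copy. The sketch below assumes both copies are reachable as directories; the function names and directory layout are hypothetical. It reports files that are missing or differ after the restore; extra files in the restored copy are not flagged.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """SHA-256 of a file, read in chunks so large files fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_restore(source_dir: str, restored_dir: str) -> list[str]:
    """Return relative paths that are missing or differ in the restored copy."""
    src, dst = Path(source_dir), Path(restored_dir)
    problems = []
    for file in sorted(p for p in src.rglob("*") if p.is_file()):
        rel = file.relative_to(src)
        target = dst / rel
        if not target.is_file() or sha256_of(target) != sha256_of(file):
            problems.append(rel.as_posix())
    return problems

# Usage (hypothetical paths): an empty list means every file was restored intact.
# verify_restore("/data/lims_snapshot", "/restore_test/lims")
```

The source should be a frozen snapshot taken at backup time; comparing against live data would report legitimate changes made since the backup.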

Finally, it’s critical to assess whether new regulatory requirements or guidance updates (like the proposed revision of Annex 11) have introduced compliance gaps that need to be closed.

Tip: Develop a periodic review checklist tailored to your system’s risk classification, incorporating all these areas to ensure nothing is missed.
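A risk-tailored checklist can be generated from a common base plus class-specific items. The sketch below is illustrative only: the item wording and which items apply to which risk class are assumptions, to be replaced by the organization's own procedure.

```python
# Items every review covers, regardless of risk class (illustrative wording).
BASE_ITEMS = [
    "Changes since last review assessed",
    "Previous review, audit and CAPA actions closed",
    "User access and permissions reviewed",
]

# Hypothetical assignment of additional items per risk class.
RISK_ITEMS = {
    "high": [
        "Audit trail sample reviewed",
        "Backup restore test verified",
        "Disaster recovery plan tested",
        "Security incidents and threats assessed",
        "Vendor contracts and service agreements reviewed",
        "Regulatory and guidance updates assessed",
    ],
    "medium": [
        "Audit trail sample reviewed",
        "Backup restore test verified",
        "Regulatory and guidance updates assessed",
    ],
    "low": ["Regulatory and guidance updates assessed"],
}


def build_checklist(risk_class: str) -> list[str]:
    """Combine the common items with those required for the given risk class."""
    return BASE_ITEMS + RISK_ITEMS[risk_class]


print(len(build_checklist("high")))  # 9
```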

Frequency of Periodic Reviews

The frequency of periodic reviews must be defined using a risk-based approach. Systems with a higher impact on product quality or patient safety, such as electronic batch record systems, manufacturing control systems, or laboratory information management systems (LIMS), should be reviewed more frequently, typically annually.

Lower-risk systems, such as training platforms or document repositories, may justify a longer interval, such as every two to three years. Regardless of the interval, each system should have a documented periodic review plan, and a final review must always be conducted when the system is decommissioned.
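Turning a risk class into a next-due date is simple date arithmetic. The sketch below assumes hypothetical intervals of 12 months for high risk and 36 months for low risk, in line with the ranges above, plus an illustrative 24 months for a medium class. The final review at decommissioning is event-driven and therefore not part of this calculation.

```python
import calendar
from datetime import date

# Hypothetical review intervals in months per risk class.
INTERVAL_MONTHS = {"high": 12, "medium": 24, "low": 36}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day for shorter months."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def next_review_due(last_review: date, risk_class: str) -> date:
    return add_months(last_review, INTERVAL_MONTHS[risk_class])


print(next_review_due(date(2024, 2, 29), "high"))  # 2025-02-28
print(next_review_due(date(2024, 5, 10), "low"))   # 2027-05-10
```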

Tip: Align periodic review frequencies with internal audit schedules where possible to streamline compliance activities.

Process for Performing a Periodic Review

The periodic review process can be broken down into five practical steps:

Step 1: Initiation

A periodic review is typically triggered by a calendar-based schedule or a significant change to the system or its environment. The review team should include representatives from IT, Quality Assurance (QA), and the business process owner. This cross-functional team ensures both technical and regulatory aspects are properly evaluated.
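The initiation logic (calendar-driven or event-driven) and the cross-functional team check can be expressed in a few lines. This is a minimal sketch; the function names and the representation of "significant changes" as a list of descriptions are assumptions for illustration.

```python
from datetime import date

# Functions that must be represented on the review team, per the text above.
REQUIRED_FUNCTIONS = {"IT", "QA", "Business process owner"}


def review_triggered(next_due: date, today: date,
                     significant_changes: list[str]) -> tuple[bool, str]:
    """Decide whether to initiate a periodic review and record why."""
    if today >= next_due:
        return True, "scheduled review due"
    if significant_changes:
        return True, "significant change: " + ", ".join(significant_changes)
    return False, "no trigger"


def missing_functions(team: dict[str, str]) -> set[str]:
    """team maps member name -> function; returns functions not yet represented."""
    return REQUIRED_FUNCTIONS - set(team.values())


print(review_triggered(date(2025, 1, 31), date(2024, 9, 1), ["database server migration"]))
# (True, 'significant change: database server migration')
print(missing_functions({"A. Lee": "IT", "R. Patel": "QA"}))
# {'Business process owner'}
```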

Step 2: Documentation Review

Collect and examine all relevant documentation, including validation deliverables (e.g., URS, test scripts, traceability matrices), change controls, deviations, CAPA reports, and training records. This helps establish a clear picture of what has changed and whether updates were properly controlled and documented.
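Part of the documentation review is confirming that the traceability matrix is still complete. The sketch below assumes the matrix can be exported as a mapping of requirement IDs to test script IDs (the IDs shown are hypothetical). It reports requirements with no linked test and linked tests that have no recorded execution.

```python
def traceability_gaps(requirements, trace_links, executed_tests):
    """Return (requirements without tests, linked tests never executed).

    requirements: iterable of URS IDs
    trace_links: {requirement ID: [test script IDs]}
    executed_tests: set of test script IDs with a recorded result
    """
    untested = sorted(r for r in requirements if not trace_links.get(r))
    unexecuted = sorted(
        {t for r in requirements for t in trace_links.get(r, []) if t not in executed_tests}
    )
    return untested, unexecuted


reqs = ["URS-001", "URS-002", "URS-003"]
links = {"URS-001": ["TS-10"], "URS-002": ["TS-11", "TS-12"]}
print(traceability_gaps(reqs, links, executed_tests={"TS-10", "TS-11"}))
# (['URS-003'], ['TS-12'])
```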

Step 3: Technical System Assessment

Perform hands-on or tool-based checks of system configurations, user access rights, backup records, audit trails, and system logs. If applicable, perform a sample audit trail review to check for anomalies or non-compliance. Verify whether restore tests have been executed as per the backup plan.

Tip: Use automated tools for audit trail analysis and log parsing to improve coverage and reduce review time.
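Automated audit trail analysis typically combines rule-based flags with a random sample for manual review. The sketch below assumes entries exported as dictionaries with hypothetical `id`, `timestamp`, `action`, and `reason` fields; the two rules (changes without a documented reason, activity outside 07:00 to 19:00) are illustrative, not a complete rule set.

```python
import random
from datetime import datetime


def flag_entries(entries, work_start: int = 7, work_end: int = 19):
    """Return (entry ID, reasons) for entries matching the illustrative rules."""
    flagged = []
    for e in entries:
        ts = datetime.fromisoformat(e["timestamp"])
        reasons = []
        if e["action"] in {"delete", "modify"} and not e.get("reason"):
            reasons.append("change without documented reason")
        if not work_start <= ts.hour < work_end:
            reasons.append("outside working hours")
        if reasons:
            flagged.append((e["id"], reasons))
    return flagged


def sample_entries(entries, k: int, seed: int):
    """Reproducible random sample; record the seed in the review report."""
    rng = random.Random(seed)
    return rng.sample(entries, min(k, len(entries)))


entries = [
    {"id": 1, "timestamp": "2024-05-02T10:15:00", "action": "create"},
    {"id": 2, "timestamp": "2024-05-02T23:40:00", "action": "modify", "reason": "typo"},
    {"id": 3, "timestamp": "2024-05-03T09:05:00", "action": "delete"},
]
print(flag_entries(entries))
# [(2, ['outside working hours']), (3, ['change without documented reason'])]
```

Using a seeded generator means another reviewer can reproduce exactly which entries were sampled.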

Step 4: Risk and Compliance Evaluation

Using the documentation and technical findings, reassess the system’s current risk level and determine whether it still aligns with its validated state. Pay special attention to data integrity controls and assess them against the ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, and Available). Identify any deviations from current regulatory expectations, especially if new guidance has been published since the last review.
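How the risk level is reassessed depends on each company's risk management procedure. As one possible approach, the sketch below uses an FMEA-style risk priority number (severity × probability × detectability, each scored 1 to 5); the scores and the cut-offs between levels are hypothetical.

```python
def risk_priority(severity: int, probability: int, detectability: int) -> int:
    """FMEA-style risk priority number; each factor scored 1 (best) to 5 (worst)."""
    for factor in (severity, probability, detectability):
        if not 1 <= factor <= 5:
            raise ValueError("scores must be between 1 and 5")
    return severity * probability * detectability


def risk_level(rpn: int) -> str:
    # Hypothetical cut-offs; define real ones in the risk management SOP.
    if rpn >= 40:
        return "high"
    if rpn >= 15:
        return "medium"
    return "low"


previous = risk_level(risk_priority(4, 2, 2))  # RPN 16 at the last review
current = risk_level(risk_priority(4, 3, 4))   # RPN 48 after this review's findings
if current != previous:
    print(f"Risk level changed from {previous} to {current}: assess impact on validated state")
```

A change in risk level is a prompt for the review team to reconsider controls, review frequency, and the need for revalidation, not an automatic verdict.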

Step 5: Reporting and Closure

Compile the review findings into a formal report. The report should summarize all reviewed elements, document any required corrective actions, and conclude on the validated state of the system. It should be reviewed and approved by QA and other relevant stakeholders. All actions should be tracked to closure before the review is considered complete.
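The closure rule can be enforced mechanically: a review is complete only once QA has approved the report and no action remains open. This is a minimal sketch with a hypothetical `Action` record; real tracking would sit in the CAPA or QMS system.

```python
from dataclasses import dataclass


@dataclass
class Action:
    action_id: str
    description: str
    status: str  # e.g. "open", "in progress", "closed"


def can_close_review(actions: list[Action], qa_approved: bool) -> tuple[bool, list[str]]:
    """Return whether the review can be closed, plus the IDs still open."""
    open_ids = [a.action_id for a in actions if a.status != "closed"]
    return qa_approved and not open_ids, open_ids


actions = [
    Action("CAPA-101", "Remove orphaned accounts", "closed"),
    Action("CAPA-102", "Repeat restore test", "open"),
]
print(can_close_review(actions, qa_approved=True))
# (False, ['CAPA-102'])
```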

Examples of Systems and Key Review Areas

| System Type | Examples | Review Focus Areas |
|---|---|---|
| ERP | SAP, Oracle | User access, change control, audit trail completeness |
| LIMS | LabWare, STARLIMS | Integration with instruments, method versioning, audit logs |
| QMS | TrackWise, Veeva | Deviation management, document control, workflow status |
| eValidation | Kneat, ValGenesis | Closed validations, digital signatures, template integrity |
| Infrastructure | Windows/Linux servers | Patch level, user accounts, antivirus and firewall settings |

Tip: Maintain a risk-based inventory of all computerised systems and link them to their respective review frequencies and responsible owners.

Best Practices for Effective Reviews

- Use a centralized tracking system to manage periodic review schedules and store outcomes.

- Adopt standardized templates to ensure consistent documentation across systems and sites.

- Involve all relevant stakeholders, including system users, who can provide context on how the system is used in real-life scenarios.

- Ensure training records of system administrators and users are reviewed to confirm ongoing competence.

- Leverage tools for automated monitoring where possible to reduce manual effort.

Common Pitfalls to Avoid

- Conducting the review as a formality without in-depth technical evaluation.

- Ignoring the potential impact of small but cumulative changes.

- Failing to address or track findings from previous reviews.

- Overlooking changes to external regulations or guidance.

- Missing undocumented changes due to lack of configuration management.

Conclusion

Periodic reviews are a foundational component of maintaining computerised systems in a validated state. As regulatory expectations evolve—particularly with the proposed revisions to Annex 11—it becomes even more critical to implement a structured, risk-based, and well-documented approach to system lifecycle management. Organizations that proactively embrace comprehensive periodic reviews will not only ensure ongoing compliance but also increase the robustness, reliability, and performance of their computerised systems.

About the Author
